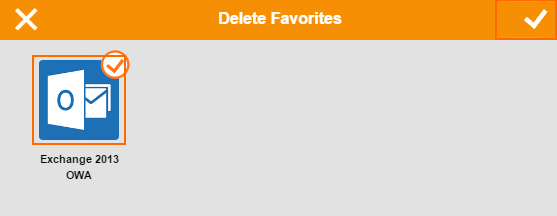

CudaLaunch is designed from the ground up for Zero Touch Provisioning and ease of use. The CudaLaunch application provides secure remote access to your organisation's applications and data from your iOS device. The application does this by securely connecting to a Barracuda CloudGen Firewall hosted by your organisation. An integrated demo environment allows you to try out the application before connecting to your organisation's network.

Access control policies are inherited from Barracuda CloudGen Firewalls, which provide a single place to manage unified security policy across all types of remote access, including CudaLaunch, SSL VPN, Barracuda Network Access Client, and standard IPsec VPN connections. Configure the services and features you want to use in CudaLaunch. For more information, see CloudGen Firewall Configuration for CudaLaunch. To relaunch the VPN App in the default browser, click Relaunch.

Instead of printing the values (10, 11) twice, I get: `thread=0: 10 0`. All CUDA calls were fine, cuda-memcheck is happy, and my card is a "GeForce RTX 2060 SUPER", which does support cooperative kernels. I checked with: `int supportsCoopLaunch = 0; if (cudaSuccess != cudaDeviceGetAttribute(&supportsCoopLaunch, cudaDevAttrCooperativeLaunch, dev) || !supportsCoopLaunch) throw std::runtime_error("Cooperative Launch is not supported on this machine configuration.");` The kernel prints with `printf("thread=%d: %g %g\n", (int)grid.thread_rank(), buf[0], buf[1]);`.

This may be the problem in your case; try removing ProfilerActivity.
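The behaviour the question expects from the grid-wide sync (both threads see 10 and 11 after the barrier) can be modelled on the CPU. The following is a minimal sketch in Python, not CUDA: `threading.Barrier` stands in for `grid.sync()`, and the function name `run_two_workers` is hypothetical. It only illustrates the semantics a correct cooperative launch should give, and says nothing about why the original kernel misbehaves.

```python
import threading


def run_two_workers():
    """CPU analogy: two workers each write one slot, wait at a barrier, then read both slots."""
    buf = [0, 0]
    barrier = threading.Barrier(2)  # plays the role of grid.sync() in the kernel
    seen = {}

    def worker(rank):
        buf[rank] = 10 + rank          # each worker updates its own element
        barrier.wait()                 # nobody continues until both writes are done
        seen[rank] = (buf[0], buf[1])  # after the barrier, both elements are visible

    threads = [threading.Thread(target=worker, args=(r,)) for r in range(2)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return seen


if __name__ == "__main__":
    for rank, values in sorted(run_two_workers().items()):
        print(f"thread={rank}: {values[0]} {values[1]}")
```

If the barrier is removed, a worker may read the other slot before it has been written, which is the kind of output the question reports.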

I am trying to understand how to synchronize a grid of threads with cudaLaunchCooperativeKernel. I have a very simple kernel where two threads update an array, sync, and both print the array: `buf[grid.thread_rank()] = 10 + grid.thread_rank();`

When code starts running, the GPU code is physically sent to the GPU and launched there. The worst case may come from the first kernel launch. The average execution time of cudaLaunch is around … us.

The CudaLaunch application connects to a Barracuda Next Gen Firewall hosted by your organisation. It has been specially designed for mobile and BYOD devices, and it connects to a Barracuda CloudGen Firewall. Unlike browser-based remote access techniques, CudaLaunch offers a more responsive interface and a richer user experience across mobile platforms. CudaLaunch uses Barracuda's own proprietary TINA VPN protocol, providing additional security and performance.

Provision VPN Connections via VPN Group Policies in the SSL VPN Service: by adding group-policy-based VPN templates to your CloudGen Firewall SSL VPN resources, you can let end users connect to the firewall via VPN connection from their Android or iOS devices.

tvm._: Traceback (most recent call last):

(3) /home/xxx/project/tvm-debug/build/libtvm.so(TVMFuncCall+0x61)

(2) /home/xxx/project/tvm-debug/build/libtvm.so(std::_Function_handler(tvm::runtime::CUDAWrappedFunc, std::vector > const&)::>::_M_invoke(std::_Any_data const&, tvm::runtime::TVMArgs&, tvm::runtime::TVMRetValue*&)+0xbc)

(1) /home/xxx/project/tvm-debug/build/libtvm.so(tvm::runtime::CUDAWrappedFunc::operator()(tvm::runtime::TVMArgs, tvm::runtime::TVMRetValue*, void**) const+0x662)

(0) /home/xxx/project/tvm-debug/build/libtvm.so(dmlc::LogMessageFatal::~LogMessageFatal()+0x32)

File "/home/xxx/project/tvm-debug/src/runtime/cuda/cuda_", line 215

File "/home/xxx/project/tvm-debug/src/runtime/module_", line 73

TVMError: Check failed: ret == 0 (-1 vs.
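The point about the first launch being the worst case suggests timing it separately from the later launches. The following is a rough sketch of that idea. The helper `time_launches` and its `launch` and `clock` parameters are hypothetical names, not part of CUDA or TVM. For real GPU work the callable would also need to wait for the device to finish, since kernel launches are asynchronous.

```python
import time


def time_launches(launch, repeats=10, clock=time.perf_counter):
    """Return (first_call_seconds, mean_seconds_of_remaining_calls) for a callable."""
    if repeats < 2:
        raise ValueError("repeats must be at least 2")
    durations = []
    for _ in range(repeats):
        start = clock()
        launch()  # hypothetical callable that launches the kernel and waits for it
        durations.append(clock() - start)
    first, rest = durations[0], durations[1:]
    return first, sum(rest) / len(rest)


if __name__ == "__main__":
    # Stand-in workload; replace with the real launch-and-synchronize call.
    first, steady = time_launches(lambda: sum(range(10_000)))
    print(f"first call: {first * 1e6:.1f} us, later calls: {steady * 1e6:.1f} us on average")
```

Reporting the first call on its own keeps one-off costs such as code upload to the device out of the average.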
CudaLaunch is an application for iOS, Android, Windows, and macOS created for remote workers who require secure and reliable access to your business.

The build and run steps are `with autotvm.apply_history_best(log_file):`, `with relay.build_config(opt_level=opt_level):`, `graph, lib, params = relay.build_module.build(` and `module = graph_runtime.create(graph, lib, ctx)`. I got an error like:

Traceback (most recent call last):

File "onnx_resnet18_fp16.py", line 56, in

File "/home/xxx/project/tvm-debug/python/tvm/contrib/graph_runtime.py", line 168, in run

File "/home/xxx/project/tvm-debug/python/tvm/_ffi/_ctypes/function.py", line 210, in `__call__`

Conversely, with small arrays, the parallel version might be slower than the serial version. It uses more resources and takes more time during repeated network drops.

I am preparing to do float16 inference with TVM. I load a ResNet-18 model from ONNX; the code is `onnx_model = onnx.load('models/resnet18_half.onnx')` followed by `sym, params = _onnx(onnx_model, shape_dict, dtype="float16")`.

It is very slow when running multiple programs at once, compared with normal office work.
